What is the essence of Node.js?

Node.js is a JavaScript runtime built on Chrome’s V8 JavaScript Engine.

JavaScript is easy to understand. But what about Runtime and Engine? How do they relate?

A JavaScript Engine can be simply defined as: A program or library that executes JavaScript code, providing mechanisms for object creation, function calls, etc. It can be a simple interpreter or a JIT compiler to bytecode.

Of course, since this entire article aims to answer “What is Node.js,” and understanding the JavaScript Engine is already 50% of the answer, we’ll explain it again in more detail (and at greater length), starting from even more fundamental concepts: Interpreter and Compiler.

Interpreter and Compiler

- Interpreter (of language A): A component that directly executes a piece of code (written in language A). You could think of the CPU as an interpreter of its instruction set. Today, an interpreter is usually understood as directly executing a program from source code without converting to machine code.

- Compiler: Translates from language A to language B such that execution yields equivalent results. Usually used to translate source code from a high-level language A to a lower-level language B that is easier to interpret, and of course, the easiest language to interpret is machine code.

Compilation usually takes a noticeable amount of time, especially when the output is optimized. One way to reduce it, at the cost of execution speed after compilation, is to translate not directly to machine code but to an intermediate language, commonly called bytecode, and run that with an interpreter. Compiling source to bytecode is faster than compiling to machine code, and executing bytecode is faster than interpreting source code directly, though slower than running machine code.
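To make the difference concrete, here is a minimal sketch of both approaches for a toy "language" of arithmetic expressions. The instruction names (PUSH, ADD, MUL) and the tree format are made up for illustration and have nothing to do with any real engine's bytecode.

```javascript
// Toy source program: the expression (2 + 3) * 4 as a tree.
const ast = { op: "*", left: { op: "+", left: 2, right: 3 }, right: 4 };

// Direct interpreter: walks the source structure every time it runs.
function interpret(node) {
  if (typeof node === "number") return node;
  const l = interpret(node.left);
  const r = interpret(node.right);
  return node.op === "+" ? l + r : l * r;
}

// Compiler: translates the tree once into a flat list of instructions.
function compile(node, code = []) {
  if (typeof node === "number") {
    code.push(["PUSH", node]);
  } else {
    compile(node.left, code);
    compile(node.right, code);
    code.push([node.op === "+" ? "ADD" : "MUL"]);
  }
  return code;
}

// Bytecode interpreter: a simple stack machine for the instructions above.
function run(code) {
  const stack = [];
  for (const [instr, arg] of code) {
    if (instr === "PUSH") {
      stack.push(arg);
    } else {
      const r = stack.pop();
      const l = stack.pop();
      stack.push(instr === "ADD" ? l + r : l * r);
    }
  }
  return stack.pop();
}

const bytecode = compile(ast);
console.log(interpret(ast));  // 20
console.log(bytecode.length); // 5 instructions
console.log(run(bytecode));   // 20
```

Both paths give the same answer. The bytecode version pays the translation cost once and then runs a simple flat loop instead of re-walking the tree.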

Now interpreter and bytecode in the JavaScript Engine definition are clear. What about JIT compiler?

To understand JIT compiler, we need to view it in relation to another concept: AOT compiler.

Ahead-of-Time (AOT) and Just-in-Time (JIT) compilation: The “time” referenced here is runtime. AOT compiles the entire source code before the program starts running; JIT compiles source code during runtime. The philosophy of JIT compilation is: instead of waiting for the compiler to translate all source code — which can take a long time — we compile the parts we need first and start running with them immediately.

Interpreter and compiler can combine to form the engine of a language in one of two ways:

- AOT combined with interpreter: Source code is fully compiled into machine code or bytecode before execution, then an interpreter executes it. For example, CPython compiles Python source code to bytecode in a flash, and that bytecode then runs on CPython’s interpreter.

- JIT combined with interpreter: Fully compiled code runs faster but takes more time before starting; directly interpreting source code starts immediately but runs slowly. The compromise: use an interpreter to quickly start executing source code, then use a JIT compiler to translate and replace source code with compiled code once it’s ready.
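The JIT-plus-interpreter compromise can be sketched as a toy "tiered" function: it starts out interpreted and switches to a compiled version once it has been called often enough. Everything here is an assumption made for illustration: the threshold value, the token format, and the use of a generated JavaScript function as a stand-in for machine code.

```javascript
const HOT_THRESHOLD = 3; // hypothetical: calls before we bother compiling

// `source` is a postfix token list over one variable x, e.g. x 2 * 1 +
function makeTiered(source) {
  let calls = 0;
  let compiled = null;
  const stats = { interpreted: 0, compiled: 0 };

  // Slow path: re-reads the source tokens on every call.
  function interpretTokens(x) {
    const stack = [];
    for (const t of source) {
      if (t === "x") stack.push(x);
      else if (typeof t === "number") stack.push(t);
      else {
        const r = stack.pop();
        const l = stack.pop();
        stack.push(t === "+" ? l + r : l * r);
      }
    }
    return stack.pop();
  }

  // "Compiler": builds a real JavaScript function from the tokens, once.
  function compileTokens() {
    const stack = [];
    for (const t of source) {
      if (t === "x" || typeof t === "number") stack.push(String(t));
      else {
        const r = stack.pop();
        const l = stack.pop();
        stack.push(`(${l} ${t} ${r})`);
      }
    }
    return new Function("x", `return ${stack.pop()};`);
  }

  function call(x) {
    calls++;
    if (compiled) {
      stats.compiled++;
      return compiled(x);
    }
    // The function became hot: compile now, use the result from the next call on.
    if (calls >= HOT_THRESHOLD) compiled = compileTokens();
    stats.interpreted++;
    return interpretTokens(x);
  }

  return { call, stats };
}

const f = makeTiered(["x", 2, "*", 1, "+"]); // computes x * 2 + 1
const results = [1, 2, 3, 4, 5].map((x) => f.call(x));
console.log(results); // [ 3, 5, 7, 9, 11 ]
console.log(f.stats); // { interpreted: 3, compiled: 2 }
```

The program starts producing results immediately, and only the code that proves to be hot pays the compilation cost.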

V8 Engine

V8 is an open-source JavaScript engine written in C++, developed by Google as part of the Chromium project, first released with the initial version of the Chrome browser. In its original design, V8 compiled JavaScript directly to native machine code instead of interpreting bytecode in the traditional way.

Node.js was initially built on V8 due to its astonishing execution speed compared to previous JavaScript engines — fast enough to power a high-performance server-side system. Today, other vendors’ engines have caught up with V8. Node.js has also released a version using Microsoft’s Chakra Engine at https://github.com/nodejs/node-chakracore, but when talking about Node.js, V8 is still the standard reference.

The name V8 Engine — chosen by Google — is emotionally evocative, conjuring images of powerful car engines producing massive output from an 8-cylinder V-shaped design, rarely under 3.0L displacement and sometimes exceeding 8.0L, like the engine in the Audi R8.

Google’s engineers likely love cars, because they continued naming components within their V8 engine Crankshaft, TurboFan, and Ignition — key technical components of modern internal combustion engines that we’ll encounter later in this article.

But enough digression. The three key factors behind V8’s high performance are:

- Fast Property Access

- Dynamic Machine Code Generation

- Efficient Garbage Collection

Fast Property Access

JavaScript is a dynamic programming language, meaning properties can be added, removed, or changed at runtime. Most JavaScript Engines use a dictionary-like data structure to store object properties — every property access requires a dynamic dictionary lookup to find the property’s memory location, slower than direct property access in traditional class-based languages.

To avoid dynamic lookup, V8 automatically creates a hidden class for each object, turning JavaScript objects into class-based ones. Each time a property is added to an object, V8 creates a new hidden class and transitions the object to this new class.
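Hidden classes can't be observed from plain JavaScript, so the following is only a toy model of the idea, not V8's real data structures. The names HiddenClass, ToyObject, and ROOT are invented for this sketch. What it shows is that objects which add the same properties in the same order end up sharing one hidden class, while a different order leads to a different one.

```javascript
// Toy model of hidden-class transitions (illustrative only).
class HiddenClass {
  constructor(offsets = new Map()) {
    this.offsets = offsets;       // property name -> slot index
    this.transitions = new Map(); // property name -> next HiddenClass
  }

  // Hidden class reached by adding `name`; created only the first time.
  addProperty(name) {
    if (!this.transitions.has(name)) {
      const next = new Map(this.offsets);
      next.set(name, next.size);
      this.transitions.set(name, new HiddenClass(next));
    }
    return this.transitions.get(name);
  }
}

const ROOT = new HiddenClass(); // shared by every empty object

class ToyObject {
  constructor() {
    this.hiddenClass = ROOT;
    this.slots = []; // values live at fixed offsets, no dictionary needed
  }
  set(name, value) {
    if (!this.hiddenClass.offsets.has(name)) {
      this.hiddenClass = this.hiddenClass.addProperty(name);
    }
    this.slots[this.hiddenClass.offsets.get(name)] = value;
  }
  get(name) {
    return this.slots[this.hiddenClass.offsets.get(name)];
  }
}

const p1 = new ToyObject(); p1.set("x", 1); p1.set("y", 2);
const p2 = new ToyObject(); p2.set("x", 3); p2.set("y", 4);
const p3 = new ToyObject(); p3.set("y", 5); p3.set("x", 6);

console.log(p1.hiddenClass === p2.hiddenClass); // true: same order, same class
console.log(p1.hiddenClass === p3.hiddenClass); // false: different order
console.log(p3.get("x"));                       // 6
```

A practical takeaway often drawn from this: initializing an object's properties in a consistent order (for example in a constructor) gives the engine the best chance of sharing hidden classes.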

Once JavaScript objects become class-based, a classic compiler optimization technique becomes viable and is incorporated into V8: Inline Caching, boosting JavaScript performance by up to dozens of times in long-running programs.
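Here is a rough sketch of what an inline cache does at a single property-access site. It is again a toy model under stated assumptions: each object carries an explicit `shape` (standing in for its hidden class) plus a `slots` array, and `makeCachedGetter` is a made-up helper, not an engine API.

```javascript
// Toy inline cache for one property-access site (not V8 internals).
function makeCachedGetter(name) {
  let cachedShape = null;
  let cachedOffset = -1;
  const stats = { hits: 0, misses: 0 };

  function get(obj) {
    if (obj.shape === cachedShape) {
      // Fast path: same shape as last time, reuse the remembered offset.
      stats.hits++;
      return obj.slots[cachedOffset];
    }
    // Slow path: look the property up, then remember the answer.
    stats.misses++;
    cachedShape = obj.shape;
    cachedOffset = obj.shape.get(name);
    return obj.slots[cachedOffset];
  }

  return { get, stats };
}

const pointShape = new Map([["x", 0], ["y", 1]]);
const flippedShape = new Map([["y", 0], ["x", 1]]);

const a = { shape: pointShape, slots: [1, 2] };
const b = { shape: pointShape, slots: [3, 4] };
const c = { shape: flippedShape, slots: [5, 6] };

const getX = makeCachedGetter("x");
const values = [getX.get(a), getX.get(b), getX.get(c)];

console.log(values);     // [ 1, 3, 6 ]
console.log(getX.stats); // { hits: 1, misses: 2 }
```

The cache only pays off when the same access site keeps seeing objects of the same shape, which is why the technique depends on objects behaving like instances of a class.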

Dynamic Machine Code Generation

V8 translates JavaScript source code directly to native machine code for high execution speed, while also being able to optimize (and re-optimize) compiled code at runtime based on data collected from a profiler.

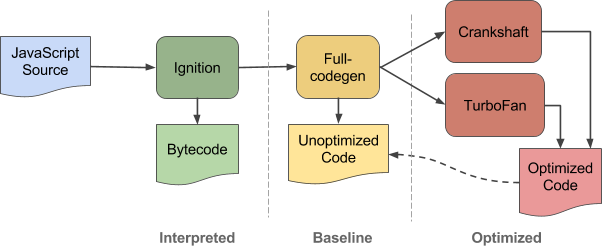

V8 has two compilers. Initially, all your source code is parsed, converted to AST, and fed into the Full-Codegen Compiler, which produces the first machine code version of your program.

Full-Codegen Compiler: Its job is to turn your source code into machine code as fast as possible — no optimization whatsoever. It also injects some type-feedback code to collect information for later optimization. This compiler performs no analysis and knows nothing about data types in the source code at this stage.

To improve performance, V8 continues monitoring the program at runtime via a profiler — a component in V8’s architecture that collects information to find which functions are hot functions (called repeatedly many times). That’s when optimization is needed for better compiled code. And that’s when Crankshaft steps in.

Optimizing compiler: Crankshaft (and more recently TurboFan) makes predictions about functions based on profiler data, re-compiles, and replaces unoptimized code via on-stack replacement (OSR).

If the assumptions are wrong — for example, it assumed a and b would always be number in a + b somewhere and used direct numeric addition instead of checking types and using the appropriate + operator each time, but then b suddenly receives a string — it simply de-optimizes and falls back to the unoptimized code.
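The a + b scenario looks roughly like this in practice. Note that the script itself cannot prove that optimization or de-optimization happened; it only shows that the result stays correct either way. The iteration count is an arbitrary choice, and whether V8 actually optimizes `add` here depends on its internal heuristics.

```javascript
function add(a, b) {
  return a + b;
}

// Warm-up: many calls with numbers only, which makes `add` a candidate hot function.
let sum = 0;
for (let i = 0; i < 100000; i++) {
  sum = add(sum, 1);
}

// Surprise: b is suddenly a string. Any "numbers only" assumption no longer
// holds, so the engine must fall back to the general meaning of +.
const mixed = add(1, "2");

// Numeric calls still work afterwards.
const after = add(2, 3);

console.log(sum);   // 100000
console.log(mixed); // "12"
console.log(after); // 5
```

If you are curious, running a script like this with V8's tracing flags, such as `node --trace-opt --trace-deopt`, should print the engine's optimization decisions. The exact output varies between versions.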

This is how V8 treats our source code:

Image source: v8project.blogspot.com

In 2016, an interpreter named Ignition was added to V8 with the goal of reducing memory usage on memory-constrained systems like Android.

Efficient Garbage Collection

V8’s garbage collector is advertised as a stop-the-world, generational, accurate garbage collector that reclaims memory from objects no longer in use by the process very efficiently. How efficient, why, or whether it’s truly more efficient than other engines — I honestly can’t say.

Initially, JavaScript was designed to perform small, short-lived tasks — like attaching an event listener to a browser element. The engine back then was simply an interpreter reading and executing JavaScript source code. Over time, we demanded more from it.

The first application to entrust heavy tasks to JavaScript was Google Maps. From there, people realized the need for faster JavaScript in longer-running runtimes.

JIT compilation takes more initialization time than interpretation but is much faster in long runtimes. With their JavaScript-heavy web applications, Google invested heavily in pushing browsers to improve JavaScript performance, and V8 was born as a result.

Now, the longer a JavaScript process runs, the more optimized and higher-performing it becomes. People felt it was viable for a more demanding environment than the web browser: server-side.

JavaScript Runtime

Introduced as a JavaScript Runtime, how does Node.js differ from a JavaScript Engine? Inspired by a StackOverflow answer, we can roughly understand it as follows:

JavaScript runs inside a container — a program that takes your source code and executes it. This program does two things:

- Parses source code and executes each executable unit.

- Provides some objects so JavaScript can interact with the outside world.

The first part is called the Engine. The rest is called the Runtime.

In practice, V8 implements ECMAScript according to the standard, meaning anything outside the standard is absent from V8.

To interact with the environment, V8 provides template classes that wrap objects and functions written in C++. These C++ functions can read/write the file system, perform networking operations, or communicate with other system processes. By setting up a JavaScript context with a global scope containing JavaScript instances created from these templates and running our source code within this context, our code is ready to interact with the world.

And that’s the job of a Runtime Library: create a runtime environment providing built-in libraries as global variables for your code to use during execution, accept source code as an argument, and execute it in the created context.
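Node.js lets us play the role of a tiny runtime ourselves through its built-in vm module. In the sketch below we create a fresh context, hand it a single host function, and run source code inside it. The `print` function and the `logged` array are hypothetical names chosen for this example.

```javascript
const vm = require("vm");

// The only "outside world" capability our mini runtime provides.
const logged = [];
const sandbox = { print: (msg) => logged.push(String(msg)) };

// A new global scope: ECMAScript built-ins plus whatever we put in the sandbox.
const context = vm.createContext(sandbox);

const source = `
  print("setTimeout is " + typeof setTimeout);
  print("Array is " + typeof Array);
  [1, 2, 3].map((n) => n * 2).join(",");
`;

// Accept source code as an argument and execute it in the created context.
const result = vm.runInContext(source, context);

console.log(logged); // [ 'setTimeout is undefined', 'Array is function' ]
console.log(result); // "2,4,6"
```

Inside the new context, Array exists because it is part of the language, while setTimeout does not, because nobody provided it.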

In a browser runtime environment like Chrome, the context Chrome provides to V8 includes global variables like window, console, DOM object, XMLHttpRequest, and the timer setTimeout().

All of these come from Chrome, not from V8 itself. Instead, V8 provides the standard built-in objects present in every JavaScript environment, described in the ECMAScript Standard, including data types, operators, and special objects and functions such as value properties (Infinity, NaN, null, undefined), Object, Function, Boolean, String, Number, Map, Set, Array, parseInt(), eval(), etc.

Leaving the browser world, leaving the DOM behind, Node.js brings us many more built-in libraries like fs for file system interaction, http and https for networking, tls, tty, cluster, os, etc. The issue is, we don’t always need all of these — creating a context with so many unnecessary global variables clearly isn’t a great approach.

Node.js therefore groups functionality into separate modules and implements a module loading mechanism via the require function and the exports object, enabling more flexible contexts. Under the hood, the built-in modules ultimately rely on bindings implemented in C/C++.
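A small illustration of loading on demand, using only the built-in path module (the file names are arbitrary examples):

```javascript
// Nothing from `path` is available until we ask for it explicitly.
const path = require("path");

// Requiring the same module again returns the same cached object.
const samePath = require("path") === path;

// Use the module through the object it exports.
const joined = path.posix.join("a", "b", "c.txt");

console.log(samePath); // true
console.log(joined);   // a/b/c.txt
```

Our code only pulls in what it actually uses, and each module is initialized once no matter how many times it is required.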

That’s the explanation behind Node.js’s introduction as a runtime built on Chrome’s V8 JavaScript Engine, and that’s how your JavaScript code interacts with low-level APIs in a synchronous manner. V8 runs your code in a single thread, sequentially, instruction by instruction, using a structure to manage active subroutines called the call stack.

Call Stack and Event Loop

The call stack isn’t something new; we’ve always needed it to ensure correct program execution. It’s just that in high-level languages, call stack management is hidden and automated. If we push too many stack frames and exhaust the space allocated to the call stack, we face the legendary stack overflow.
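We can provoke this on purpose with a function that never stops calling itself. The maximum depth reached depends on the engine version and its stack size settings, so the sketch below doesn't assume any particular number.

```javascript
let maxDepth = 0;

// Every call pushes a new stack frame and never returns normally.
function descend(depth) {
  maxDepth = depth;
  return 1 + descend(depth + 1);
}

let caught = null;
try {
  descend(1);
} catch (err) {
  caught = err; // V8 reports stack exhaustion as a RangeError
}

console.log(caught instanceof RangeError); // true
console.log(caught.message);
console.log("frames pushed before overflow:", maxDepth);
```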

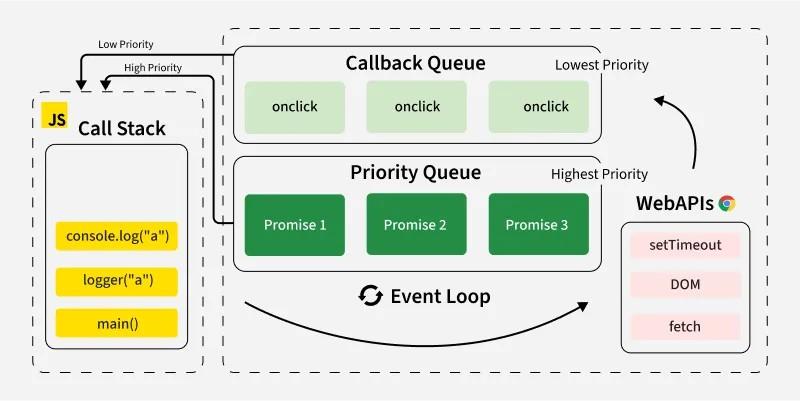

The story of JavaScript’s call stack has been told many times. We all know a stack frame is pushed every time a function is called and popped on return. After sequentially processing all instructions in the program, the call stack becomes empty. A miracle called the event loop picks up callback functions from a construct called the event queue (or task queue), pushes them onto the call stack, and the V8 engine continues executing the function now sitting on the call stack.
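Stripped of all detail, the mechanism described above can be modeled in a few lines. This is a toy model, not how libuv or any browser implements it: `taskQueue`, `enqueue`, and `runToyEventLoop` are invented names, and a real event loop also waits for I/O and timers instead of simply draining an array.

```javascript
const taskQueue = []; // stands in for the event queue
const log = [];

// Stand-in for an asynchronous API: it only schedules the callback.
function enqueue(callback) {
  taskQueue.push(callback);
}

function main() {
  log.push("main start");
  enqueue(() => log.push("callback A"));
  enqueue(() => {
    log.push("callback B");
    enqueue(() => log.push("callback C")); // callbacks may schedule more work
  });
  log.push("main end");
}

function runToyEventLoop(entry) {
  entry(); // run the program until its call stack is empty
  while (taskQueue.length > 0) {
    const task = taskQueue.shift();
    task(); // each callback runs to completion before the next is picked up
  }
}

runToyEventLoop(main);
console.log(log.join(" -> "));
// main start -> main end -> callback A -> callback B -> callback C
```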

This is how JavaScript performs asynchronous calls. This is also why, even when calling setTimeout() with a delay of 0, our callback still has to wait until all code in the program finishes executing (the call stack becomes empty) before being invoked.
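A quick way to see this for yourself:

```javascript
const order = [];

order.push("start");

// Delay 0 does not mean "run now": the callback is only queued.
setTimeout(() => {
  order.push("timeout callback");
  console.log(order.join(" -> ")); // start -> end -> timeout callback
}, 0);

order.push("end");

// At this point the callback has not run yet.
console.log(order.length); // 2
```

The callback only gets its turn after the last synchronous line has finished and the call stack is empty.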

Image source: geeksforgeeks.org

V8 receives the event loop as an input parameter when initializing the environment. Different environments have their own event loop and their own APIs for making asynchronous requests, which push our callback function into the event queue, where it waits its turn.

For Node.js, its event loop implementation is libuv. Without libuv, the picture of an event-driven, asynchronous, non-blocking I/O Node.js would be incomplete. V8 doesn’t even know what I/O is, let alone blocking or non-blocking.
